Meta learning MAML

11/14/2023

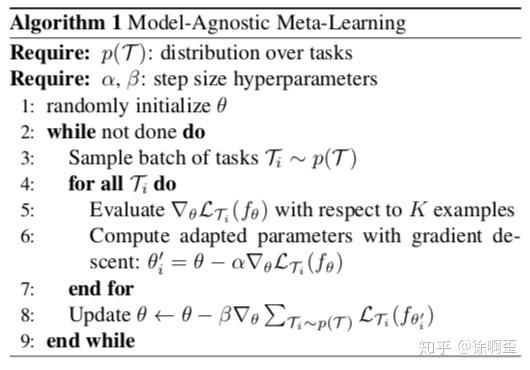

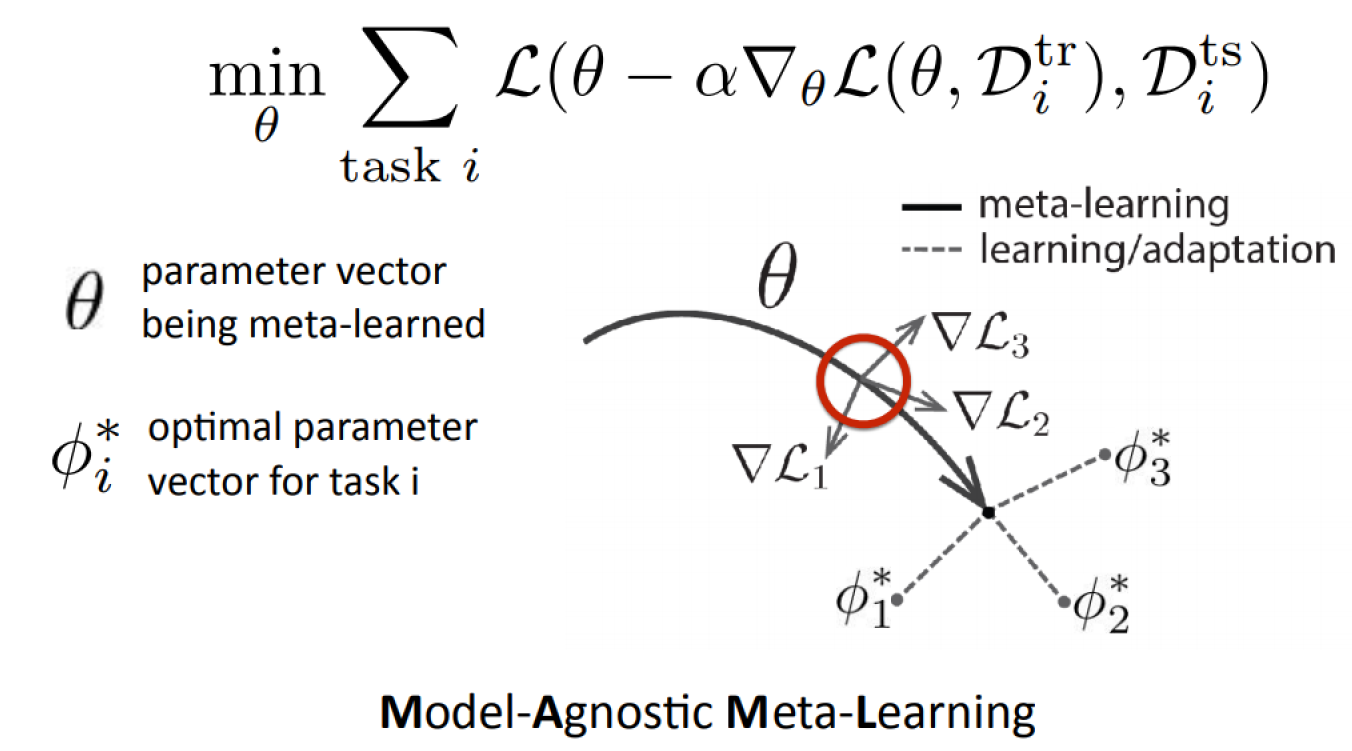

If you tried the exercise above, you have undoubtedly received a very high accuracy score. Even though you have likely never seen some of the characters, you can classify them given only a single example, potentially without realizing that what you are able to do off the top of your head would be pretty impressive to a typical machine learning model. In this article, we give an interactive introduction to model-agnostic meta-learning (MAML), a well-established method in the area of meta-learning that attempts to equip conventional machine learning architectures with the power to gain meta-knowledge about a range of tasks, in order to solve problems like the one above at a human level of accuracy.

It is well known in the machine learning community that models must be trained with a large number of examples before meaningful predictions can be made for unseen data. However, we do not always have enough data available to cater to this need: a sufficient amount of data may be expensive or even impossible to acquire. Nevertheless, there are good reasons to believe that this is not an inherent issue of learning. Humans are known to excel at generalizing after seeing only a few examples. It should, however, also be noted that humans do not learn novel concepts "in a vacuum" but build on a lot of prior knowledge learned in other (similar) tasks. Enabling machine learning methods to achieve the same brings us a step closer to learning with human-like data efficiency. Consequently, we would require algorithms to do the following two things:

(a) obtain as much prior knowledge about the world as possible, and
(b) use that knowledge to generalize well from only a few samples.

Model-agnostic meta-learning, a method commonly abbreviated as MAML, will be the central topic of this article. It is rooted in two fields that each address one of the above requirements. While introducing these two fields to you, we also equip you with the most important terms and concepts we will need throughout the rest of the article.

While one sample is clearly not enough for a model without prior knowledge, we can pretrain models on tasks that we assume to be similar to the target tasks. The core idea is to derive an inductive bias from a set of problem classes in order to perform better on other, newly encountered problem classes. This similarity assumption allows the model to collect meta-knowledge that is obtained not from a single task but from the distribution of tasks. The learning of this meta-knowledge is called "meta-learning".

Achieving rapid convergence of machine learning models on a few samples is known as few-shot learning. If you are presented with \(N\) samples per class and are expected to learn a classification problem with \(M\) classes, we speak of an \(M\)-way-\(N\)-shot problem. The small exercise from the beginning, which we offer either as a \(20\)- or \(5\)-way-1-shot problem, is a prominent example of a few-shot learning task, whose symbols are taken from the Omniglot dataset. Omniglot contains 1623 different characters across 50 alphabets, with each character being represented by 20 instances, each drawn by a different person. Because of that, the original authors described it as a "transpose" of the well-known MNIST dataset, which has only 10 classes but thousands of examples per class.

Recently, model-agnostic meta-learning (MAML) and its variants have drawn much attention in few-shot learning. In this paper, we investigate how to improve the performance of a portable MAML network so that it can be used in handheld devices, such as small robots, mobile phones, and laptops. We propose a novel approach named portable model-agnostic meta-learning (P-MAML), where valuable knowledge is distilled from a teacher MAML network to a portable student MAML network. Moreover, data augmentation and a ResNet architecture are employed in the teacher MAML network so as to avoid overfitting and enhance efficiency. To the best of our knowledge, this is the first work to consider a portable meta-learning model through knowledge distillation (KD) to learn a good initialization. Extensive experimental results on three real datasets show that our P-MAML algorithm greatly enhances the accuracy through KD from the teacher network. As shown, P-MAML with KD improves the performance of one-shot learning by as much as 10% in comparison to that without KD.
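
To make the idea of distilling knowledge from a teacher network into a smaller student more concrete, here is a minimal sketch of a distillation loss for a single example. The text above does not spell out the loss that P-MAML uses, so this is an assumption: we use the common temperature-softened formulation, which mixes the usual cross-entropy on the true label with a KL term that pulls the student's softened predictions toward the teacher's. The function names and the `temperature` and `alpha` parameters are hypothetical and chosen for illustration only.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=4.0, alpha=0.5):
    """Assumed KD loss: alpha * hard-label CE + (1 - alpha) * T^2 * KL(teacher || student)."""
    # Hard-label term: cross-entropy of the student against the true class.
    student_probs = softmax(student_logits)
    hard_term = -math.log(student_probs[label])

    # Soft term: KL divergence between the softened teacher and student outputs.
    teacher_soft = softmax(teacher_logits, temperature)
    student_soft = softmax(student_logits, temperature)
    soft_term = sum(
        p * (math.log(p) - math.log(q))
        for p, q in zip(teacher_soft, student_soft)
        if p > 0
    )

    # The T^2 factor keeps the soft term on a scale comparable to the hard term.
    return alpha * hard_term + (1 - alpha) * temperature ** 2 * soft_term


# Example: a student that agrees with the teacher is penalized less
# than one that ranks the classes in the opposite order.
teacher = [2.0, 0.5, -1.0]
print(distillation_loss([2.0, 0.5, -1.0], teacher, label=0))
print(distillation_loss([-1.0, 0.5, 2.0], teacher, label=0))
```

In a meta-learning setting, a loss of this kind would presumably be evaluated on the tasks the student is trained on, but how exactly it enters the MAML updates is not described here, so we leave that part out.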

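The \(M\)-way-\(N\)-shot terminology introduced earlier can also be sketched in a few lines of code. The snippet below samples one such task (often called an episode) from a dataset organized like Omniglot, that is, many classes with 20 instances each. The dataset here is a toy stand-in made of strings rather than real images, and `sample_episode`, `m_way`, `n_shot`, and `n_query` are hypothetical names we introduce for illustration.

```python
import random


def sample_episode(dataset, m_way, n_shot, n_query=1, rng=None):
    """Sample one M-way-N-shot task from a dict mapping class name -> list of instances.

    Returns (support, query), each a list of (instance, label) pairs, where
    labels are re-indexed to 0..m_way-1 within the episode.
    """
    rng = rng or random.Random()
    # Pick M distinct classes for this task.
    classes = rng.sample(sorted(dataset), m_way)
    support, query = [], []
    for label, cls in enumerate(classes):
        # Draw N support instances plus a few held-out query instances per class.
        instances = rng.sample(dataset[cls], n_shot + n_query)
        support += [(x, label) for x in instances[:n_shot]]
        query += [(x, label) for x in instances[n_shot:]]
    return support, query


# Toy stand-in for an Omniglot-like dataset: 30 characters, 20 drawings each.
toy_dataset = {
    f"char_{c}": [f"char_{c}_drawer_{d}" for d in range(20)]
    for c in range(30)
}

support, query = sample_episode(
    toy_dataset, m_way=5, n_shot=1, n_query=3, rng=random.Random(0)
)
print(len(support), "support examples,", len(query), "query examples")
```

A 5-way-1-shot episode therefore contains exactly five labeled examples to learn from, one per class, which is the situation you faced in the exercise at the beginning.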